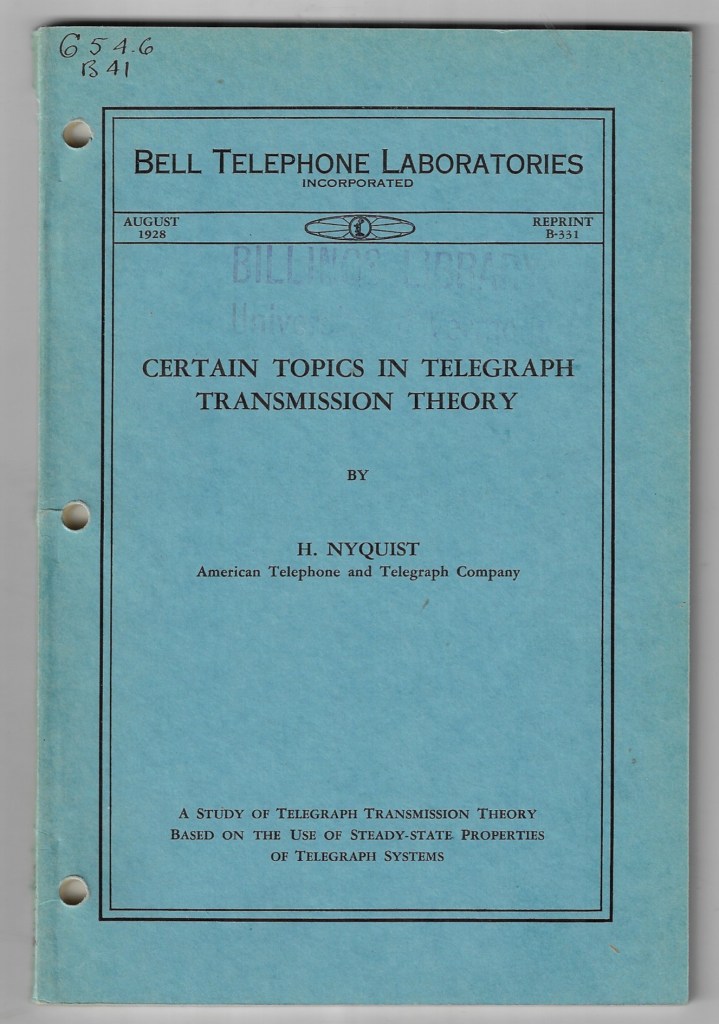

Nyquist refers to a cluster of ideas centered on the Swedish-American engineer Harry Nyquist, whose work in the early twentieth century formalized limits on signal transmission, stability, and sampling. In its most commonly invoked form, the Nyquist–Shannon sampling theorem, it states that a continuous signal containing no frequencies above fₘₐₓ can be perfectly reconstructed from discrete samples if it is sampled at a rate greater than 2fₘₐₓ. That threshold, 2fₘₐₓ, is called the Nyquist rate. The inequality is strict, because a sinusoid sampled at exactly twice its frequency can yield samples that all fall on its zero crossings. If sampling falls below this threshold, higher frequencies “fold” into lower ones, producing aliasing: a structural misreading of the signal, not just noise but a false identity imposed by insufficient temporal resolution. Etymologically, “Nyquist” is simply a Swedish family name, but its technical afterlife has come to signify a boundary condition: the point at which continuity can still be faithfully inferred from discreteness.

Historiographically, Nyquist’s 1928 paper on telegraph transmission (“Certain Topics in Telegraph Transmission Theory”) predates Claude Shannon’s formalization of 1948–49, yet already contains the germ of the sampling limit in the context of bandwidth and symbol rate. Shannon later universalized the insight, embedding it in information theory and linking it to entropy, channel capacity, and probabilistic signal spaces. Historicity matters here: Nyquist’s work emerges from concrete engineering constraints (telegraph wires, bandwidth limits) before being abstracted into a general law of representation.

At a more foundational level, Nyquist marks a threshold between fidelity and distortion, between a world that can be reconstructed and one that has already been irreversibly misregistered. It is not merely a rule about signals; it is a statement about the conditions under which any discrete system can claim to preserve the structure of what it samples.
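The folding can be checked numerically. The following is a minimal Python sketch using only the standard library; the 7 Hz and 3 Hz cosines, the 10 Hz sampling rate, and the helper names `sample_cosine` and `alias_frequency` are illustrative assumptions. A 7 Hz tone needs a sampling rate above 14 Hz; sampled at only 10 Hz, its samples coincide exactly with those of a 3 Hz cosine.

```python
import math


def sample_cosine(freq_hz, rate_hz, n_samples):
    """Return n_samples values of cos(2*pi*f*t) taken at t = n / rate_hz."""
    return [math.cos(2 * math.pi * freq_hz * n / rate_hz) for n in range(n_samples)]


def alias_frequency(freq_hz, rate_hz):
    """Apparent frequency of a real tone after sampling, folded into [0, rate/2]."""
    folded = freq_hz % rate_hz
    return min(folded, rate_hz - folded)


RATE = 10.0                          # samples per second; folding point is RATE / 2 = 5 Hz
high = sample_cosine(7.0, RATE, 20)  # 7 Hz tone: needs more than 14 Hz, so undersampled
low = sample_cosine(3.0, RATE, 20)   # 3 Hz tone: adequately sampled

# Each sample of the 7 Hz cosine equals the 3 Hz cosine up to rounding error.
max_diff = max(abs(a - b) for a, b in zip(high, low))
print(f"7 Hz sampled at {RATE} Hz appears as {alias_frequency(7.0, RATE)} Hz")
print(f"largest sample difference: {max_diff:.1e}")
```

The rule in `alias_frequency` is the usual one for real sampling: a tone appears at its distance from the nearest multiple of the sampling rate. With a sine instead of a cosine, the folded copy matches up to a sign flip, which is the same aliasing with inverted phase.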
Below the threshold, the system does not simply lose detail; it produces coherent but false patterns, a kind of lawful hallucination. In this sense, Nyquist names a limit where representation ceases to be accountable to its source, even while appearing internally consistent.

“Certain Topics in Telegraph Transmission Theory” is Harry Nyquist’s 1928 paper in the Transactions of the American Institute of Electrical Engineers, written at a moment when long-distance telegraphy was running into very concrete physical limits: bandwidth, noise, and the crowding of symbols along a wire. What Nyquist does in that paper is not yet the full probabilistic theory later associated with Shannon, but something more austere and structural. He asks: given a channel of finite bandwidth, how fast can distinct symbols be sent without blurring into one another? His central result is what later came to be called the Nyquist rate, in a different but related sense: if a channel has bandwidth B (in hertz), then at most 2B independent signal values per second can be transmitted without intersymbol interference, assuming ideal conditions. The concern here is not sampling an existing signal, but packing discrete pulses into time so that each remains distinguishable at the receiver.

The key insight is that bandwidth imposes a strict limit on how sharply a signal can change in time; if symbols are sent too quickly, their waveforms overlap and corrupt one another. The channel itself, by filtering frequencies, stretches each pulse, so the past leaks into the present. Nyquist formalizes this through the requirement that each pulse be zero at every sampling instant except its own, a condition whose minimum-bandwidth solution is the sinc pulse. This is already a kind of proto-sampling logic: the signal is designed so that, when observed at the right instants, each symbol appears cleanly, even though between those instants the waveform may be complex and overlapping.
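Nyquist’s zero-interference condition can be sketched with the sinc pulse. In the minimal Python example below (the bandwidth `B`, the symbol list, and the function names are assumptions chosen for illustration), one sinc pulse per symbol is placed every T = 1/(2B) seconds. The pulses overlap heavily, yet sampling the summed waveform at t = nT returns each symbol, because every other pulse passes through zero at that instant.

```python
import math


def sinc(x):
    """Normalized sinc: sin(pi*x) / (pi*x), with sinc(0) = 1."""
    if x == 0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)


B = 1000.0                          # assumed channel bandwidth in hertz
T = 1.0 / (2 * B)                   # symbol period at the signalling rate 2B
symbols = [1, -1, -1, 1, 1, -1, 1]  # illustrative antipodal symbols


def waveform(t):
    """Superpose one sinc pulse per symbol, the k-th centred at t = k*T."""
    return sum(a * sinc((t - k * T) / T) for k, a in enumerate(symbols))


# At t = n*T every pulse except the n-th passes through zero: no intersymbol interference.
recovered = [waveform(n * T) for n in range(len(symbols))]

# Halfway between two instants, every pulse contributes a nonzero amount.
contributions = [a * sinc((2.5 * T - k * T) / T) for k, a in enumerate(symbols)]

print([round(v, 9) for v in recovered])
print([round(c, 3) for c in contributions])
```

The second printout shows the other side of the construction: off the sampling grid, the waveform is a mixture of all the symbols rather than any one of them. Practical systems usually prefer raised-cosine pulses, which spend some extra bandwidth for faster-decaying tails while satisfying the same zero-crossing condition.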
The paper thus quietly contains the seed of the later sampling theorem: the recognition that time and frequency are locked in a reciprocal constraint, and that discrete recoverability depends on respecting that constraint.

Historiographically, this work sits at the hinge between analog engineering practice and abstract information theory. Nyquist is still speaking the language of telegraph pulses, line distortion, and practical signaling rates, but he is already articulating limits that do not depend on any specific technology. Shannon, twenty years later, will generalize this into a full theory of communication under noise, introducing entropy and coding. Yet Shannon’s famous results presuppose Nyquist’s earlier recognition: that bandwidth itself imposes a structural ceiling, even before randomness enters the picture.

What gives the paper its lasting force is that it identifies a boundary condition of representation. A channel does not merely carry signals; it shapes the very possibility of distinction within them. Beyond a certain density, differences collapse, not into silence but into ambiguity. Signals still arrive, but their identities are no longer separable. In that sense, Nyquist’s result is less about speed than about the preservation of form under constraint: how quickly one can speak before speech ceases to remain articulate.

Nyquist’s work ties to cryptography at the level of transmission, recoverability, and the management of distinction under constraint. Cryptography is usually described as the art of hiding meaning, but before meaning can be hidden, it must first be rendered into a signal that can survive a channel. A ciphertext is not a floating abstraction. It becomes pulses, voltages, optical flashes, radio emissions, magnetic states, packet timings. The moment encryption leaves pure mathematics and enters material transmission, it falls under Nyquist-type limits.
The encrypted message must still be sampled, clocked, synchronized, and reconstructed. If the signal is undersampled, distorted, or crowded past the channel’s capacity, the issue is no longer secrecy but legibility itself. One does not even arrive at the stage of decryption unless the cipher survives as a distinguishable form.

This is why the relation is deeper than “communications engineering helps cryptography.” Nyquist defines the minimum conditions under which discrete differences remain separable. Cryptography depends absolutely on discrete difference. A single bit flip may alter a key, corrupt a block, invalidate a signature, or make a message fail authentication. In ordinary speech, some ambiguity can be tolerated; context repairs damage. In cryptography, the tolerance is often far lower. The encrypted artifact has to remain exact enough for algorithmic recovery, and that means the channel must preserve symbol boundaries with extraordinary discipline. Nyquist’s concern with intersymbol interference therefore touches a cryptographic nerve directly: if adjacent symbols blur into one another, then ciphertext ceases to be a stable object. The secrecy may remain theoretically intact, but the message has become operationally unrecoverable.

There is also a strategic irony here. Good cryptography aims to make the message appear patternless or pseudorandom to an adversary, while Nyquist theory concerns the faithful handling of patterns through bandwidth-limited systems. Encryption deliberately destroys semantic transparency, but it cannot destroy structural transmissibility. A secure ciphertext should look meaningless, yet it must still be engineered so that receivers can sample it at the right rate, align clocks, detect frames, correct errors, and distinguish one unit from the next. So a cipher lives in a double regime: semantically opaque, physically precise. Hidden meaning still requires clean symbol timing.
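The claim that a single bit flip can make a message fail authentication is easy to demonstrate. The sketch below uses HMAC-SHA256 from Python’s standard `hmac` and `hashlib` modules as one common authentication scheme (the text names no particular scheme); the key, the eight “ciphertext” bytes, and the helper names `tag` and `flip_bit` are placeholders, not real protocol data.

```python
import hashlib
import hmac


def tag(key, message):
    """HMAC-SHA256 authentication tag over the message bytes."""
    return hmac.new(key, message, hashlib.sha256).digest()


def flip_bit(data, bit_index):
    """Return a copy of data with exactly one bit inverted."""
    buf = bytearray(data)
    buf[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(buf)


key = b"illustrative shared key"                  # placeholder pre-shared key
ciphertext = bytes.fromhex("8f3a11c0de5e77aa")    # placeholder bytes, not real ciphertext
sent_tag = tag(key, ciphertext)

received = flip_bit(ciphertext, 13)               # one bit damaged in transit

ok_original = hmac.compare_digest(tag(key, ciphertext), sent_tag)
ok_damaged = hmac.compare_digest(tag(key, received), sent_tag)

# Count how many of the 256 tag bits differ after the single input-bit flip.
diff = int.from_bytes(tag(key, received), "big") ^ int.from_bytes(sent_tag, "big")
changed_bits = bin(diff).count("1")

print(ok_original, ok_damaged, changed_bits)
```

A single flipped input bit is expected to change roughly half of the 256 tag bits, so the damaged message is rejected outright rather than accepted with a small error. The check uses `hmac.compare_digest`, which the Python documentation describes as designed to resist timing analysis.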
Obscurity in content cannot come at the expense of recoverability in form.

Modern digital security makes this even clearer. Secure internet traffic, encrypted phone calls, military radio, satellite links, and hardware security modules all rely on signal-processing layers beneath the cipher. Sampling, quantization, modulation, filtering, and synchronization operate below or alongside the encryption protocols. If those layers fail, security protocols fail with them. Conversely, side-channel attacks often exploit the fact that cryptography is never only abstract mathematics; it leaks through timings, power consumption, electromagnetic emissions, and acoustic traces. Here Nyquist returns from the other side, since the attacker’s power or electromagnetic traces are themselves sampled signals. The defender wants legitimate signal components preserved and illegitimate leakage suppressed; the attacker samples the physical residue of computation and tries to reconstruct secrets from what the machine emits. In that sense, cryptography is haunted by signal theory. Every secret computation becomes a waveform somewhere.

At a more foundational level, Nyquist and cryptography share a concern with thresholds. Nyquist names the threshold below which a signal aliases and becomes falsely legible as something else. Cryptography names the threshold beyond which a message remains formally present but inaccessible without the key. One deals with misreconstruction caused by inadequate sampling; the other with deliberate unreadability caused by transformation. But both revolve around the same question: under what conditions does a difference remain a difference? In Nyquist, the danger is that the channel folds distinctions together. In cryptography, the aim is to preserve distinctions for the authorized receiver while collapsing them into apparent noise for everyone else. That is why the two belong together. One governs the physical possibility of faithful reception; the other governs the epistemic possibility of rightful interpretation.
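The timing side channel can be made concrete without a stopwatch. In the minimal Python sketch below, the hypothetical `naive_equal` stops at the first mismatching byte and reports how many bytes it examined; that step count stands in for running time, which is what a real timing attack would measure. The secret, the alphabet, the helper `recover_by_leak`, and the assumption that the attacker already knows the secret’s length are all illustrative.

```python
import hmac


def naive_equal(secret, guess):
    """Early-exit byte comparison that also reports how many bytes it examined.

    The step count stands in for running time: it grows with the length of
    the correct prefix, which is what a timing attacker tries to measure.
    """
    if len(secret) != len(guess):
        return False, 0
    steps = 0
    for s, g in zip(secret, guess):
        steps += 1
        if s != g:
            return False, steps
    return True, steps


SECRET = b"k3y-7x"                 # hypothetical secret token
ALPHABET = bytes(range(32, 127))   # printable ASCII candidates


def recover_by_leak(length, alphabet):
    """Rebuild the secret one byte at a time using only naive_equal's output."""
    known = b""
    for position in range(length):
        # Zero-byte padding forces a mismatch right after the guessed byte
        # (this assumes the secret itself contains no zero bytes).
        padding = b"\x00" * (length - position - 1)
        best = max(
            alphabet,
            key=lambda c: naive_equal(SECRET, known + bytes([c]) + padding),
        )
        known += bytes([best])
    return known


recovered = recover_by_leak(len(SECRET), ALPHABET)
print(recovered)                                  # b'k3y-7x'
print(hmac.compare_digest(SECRET, recovered))     # True
```

A real attack must extract this signal from noisy, finely sampled timing measurements, which is where signal processing re-enters. The usual defence is a comparison whose running time does not depend on where the first mismatch occurs, which is what the standard library’s `hmac.compare_digest` is intended to provide.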

It seems like the beginning of an empirical tele-pathy. But all this seems impoverished by a lack of grammatology. And even then, the question remains whether explication through spacing and deferral constitutes pure reason.

What appears in the telegraph, and later in signal theory, is indeed a kind of empirical tele-pathy, but only in a severely disciplined sense. It is not soul touching soul across distance. It is the externalization of absence into a repeatable technical order: pulses, intervals, codes, relays, delays. One mind does not enter another; rather, a mark survives the disappearance of its sender and becomes legible elsewhere.

That is why a grammatological lens changes the entire picture. The telegraph is not primarily a victory of presence over distance. It is a victory of writing over presence. What travels is not voice as such, but discretized inscription: the iterable mark, the coded difference, the spacing of units whose sense depends on repeatability across absence. That is precisely where a merely electrical or informational account becomes impoverished. Nyquist can tell us how many distinct signal events a channel can sustain before they blur. Shannon can tell us how much information a noisy channel can carry. But neither by itself asks the deeper question of what it means for a mark to remain the same across displacement, or how meaning depends on absence from the start. Grammatology enters at that point. It asks not only how signals move, but what kind of thing a signal must be in order to be movable, iterable, and detachable from an originating intention. Telegraphy is thus not just the mechanization of communication. It is the industrial revelation that communication had always already depended on spacing, coding, delay, and the technical survivability of marks.

Seen this way, the phrase “empirical tele-pathy” becomes sharp. It names a historical machine for producing the effect of distant thought-contact through inscriptional mediation. The telegraph gives the fantasy of immediate reach while actually deepening mediation. Its intimacy is purchased through discretization. Its speed is purchased through coding.
Its apparent immediacy is built on delay, relay, and protocol. In that sense, modern communications systems do not abolish writing in favor of presence; they radicalize writing into infrastructure. The farther communication advances, the less it resembles unbroken speech and the more it reveals itself as a system of traces.

The second question then turns on Kant and Derrida. Whether explication through spacing and deferral constitutes pure reason depends on what “constitutes” and “pure” are allowed to mean. For Kant, the answer is no. Pure reason is not generated by spacing and deferral; it is the a priori faculty that legislates the conditions under which experience and judgment are possible. Difference, relation, negation, succession, and so on are categories or forms operative within transcendental structure, not the source of its purity. If spacing and deferral were said to found pure reason, then reason would no longer be pure in Kant’s sense, because its ground would lie in a movement of referral and non-self-identity that precedes self-presence. That would already mean contamination by what reason cannot master as its own transparent origin.

For Derrida, however, spacing and deferral do not simply oppose reason from outside. They inhabit the very possibility of conceptuality, signification, memory, temporization, and identity. Différance is therefore not pure reason, but the quasi-transcendental condition under which something like reason can appear at all. It is “quasi” because it conditions without presenting itself as a stable origin. It is not a first principle in the classical sense, nor a faculty, nor an intelligible ground fully present to itself.
So the strongest formulation is this: explication through spacing and deferral does not constitute pure reason if purity means self-identical a priori presence; it does, however, disclose that what was called pure reason may never have been pure, because its operations already depend on trace, interval, retention, anticipation, and the non-coincidence of thought with itself. The grammatological intervention is therefore not merely an addition to electrical communication theory. It is the disclosure that every transmission, even the transmission called reason, is structured by writing before it is structured by presence.

Now take Kant’s side. Consider pure reason versus practical will.

Kant grounds the divide between pure reason and practical will in the distinct kinds of necessity each entails. Pure reason, in the broad sense of the first Critique, works with the a priori forms that make experience possible (space and time as forms of sensibility, the twelve categories as concepts of the understanding) without recourse to empirical content. Its task is constitutive: it supplies the universal rules under which appearances can be synthesized into coherent cognition. The faculty achieves this not through historical contingency or differential play but through an intrinsic self-identity that precedes all spacing. Whatever is thought at all must conform to these transcendental conditions, so their authority cannot be postponed or deferred without abolishing the very possibility of representation.

Practical reason, by contrast, does not construct the world of phenomena; it legislates maxims for the will. Yet it, too, does so from a source irreducible to empirical heteronomy. The moral law (“act only in accordance with that maxim through which you can at the same time will that it become a universal law”) expresses a kind of necessity that binds finite agents while remaining free of pathological incentives. This law is neither extracted from observation nor imposed by an external authority; it is self-legislated in the sense that reason, when willing, must treat its own form as universally valid. Thus autonomy is not a politicized self-assertion but the internal requirement that a practical judgment respect the same universality that pure reason demands for theoretical judgments.

Critiques alleging that spacing and deferral underwrite all cognition dissolve under this bifurcation. The transcendental unity of apperception, which anchors pure reason, is not a trace left by prior marks but the condition that any content be thinkable as “mine.” It is true that empirical concepts require synthesis over time, but the very act of subsuming a manifold under a concept presupposes a timeless rule of synthesis.
Similarly, the temporality of deliberation does not relativize the moral law; the categorical imperative remains valid regardless of the duration or sequence of decision. Spacing may describe the empirical unfolding of thought, yet it can never explain the normative authority that thought already presupposes when judging or willing.

Practical will therefore complements, rather than undermines, the project of pure reason. In theoretical philosophy, the intellect asks what must be presupposed for experience to occur at all; in moral philosophy, it asks what must be presupposed for freedom to be more than an illusion of inclination. Both answers derive from the same faculty of reason viewed under different interests, and both require an unconditional principle that cannot be derived from historical différance. Hence the purity Kant defends is not naively opposed to mediation; it specifies the non-empirical grounds that make any mediation accountable to truth or duty.

Nyquist’s limit functions in engineering much like the categories of the pure understanding in Kant’s critical philosophy. Both stand prior to empirical content and legislate the conditions under which any signal, or any appearance, can be coherently synthesized. The sampling theorem does not emerge from the contingent traffic of voltages; it prescribes, a priori, the threshold rhythm of distinction (a rate above twice the highest frequency) that must obtain before continuities can be reconstructed without aliasing. In parallel fashion, the transcendental categories dictate how intuitions must be ordered before cognition can claim objective validity. The circuitry of representation therefore rests on an anterior law: without sufficient sampling, perception is condemned to misrecognition; without the unity of apperception, experience fails to be thinkable as experience at all.

Cryptography occupies a position analogous to practical reason.
Where pure reason frames the possibility of knowledge, practical reason imposes the form of universality upon the will, commanding that maxims be chosen as if they could hold for every rational being. So, too, a cipher establishes a self-imposed rule, an algorithmic maxim, that binds both sender and intended receiver while remaining indifferent to empirical incentives. The ciphertext travels through a world of noise and eavesdroppers, yet its intelligibility depends on an interior lawfulness: only those who share the key can re-enter the domain of meaning. In this sense, encryption is a technological enactment of autonomy; it substitutes principled self-limitation (the agreed key and protocol) for heteronomous dependence on the channel’s contingencies.

Nyquist and cryptography meet exactly where Kant’s two employments of reason converge: at the threshold where form must survive transmission. The channel’s bandwidth limits appear as conditions of nature, but the cipher’s discipline answers them with a rule that is free, not empirical. If respecting Nyquist’s limit guarantees that discrete distinctions remain separable, cryptographic design, together with the channel coding beneath it, guarantees that those distinctions retain normative force: error correction, authentication, and integrity checks echo the moral demand that the will’s act be universalizable and exact. Any uncorrected deviation, whether aliasing in the waveform or bit flips in the ciphertext, abolishes the law-governed identity of the message. Thus the technical system recapitulates the Kantian drama: nature supplies constraints, reason imposes law, and freedom realizes itself only within the space opened by both.

The result is a communication architecture that mirrors the critical architecture of the mind. Nyquist’s theorem offers a transcendental frame within which signals can be known; cryptography supplies the autonomous legislation that allows agents to act (speak, exchange, commit) under that frame without surrendering sovereignty.
Telegraphy, radio, fiber, satellite: each medium merely instantiates the same dual order. First, a world must obey conditions for legibility; second, rational actors must will procedures that keep their messages faithful to themselves and universally accountable in principle. Between those poles unfolds the entire modern economy of information.
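The “error correction” invoked above can be illustrated with the textbook Hamming(7,4) code, chosen here only as a simple example (the text names no particular code). Three parity bits protect four data bits, and the XOR of the 1-based positions of all set bits, called the syndrome, directly names the position of any single flipped bit. The function names `hamming74_encode` and `hamming74_decode` are hypothetical.

```python
def hamming74_encode(d):
    """Encode data bits [d1, d2, d3, d4] as a 7-bit codeword.

    Layout by 1-based position: p1 p2 d1 p3 d2 d3 d4. Parity bit p1 covers
    positions 1, 3, 5, 7; p2 covers 2, 3, 6, 7; p3 covers 4, 5, 6, 7.
    """
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4
    p2 = d1 ^ d3 ^ d4
    p3 = d2 ^ d3 ^ d4
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c):
    """Repair at most one flipped bit; return (data bits, error position or 0)."""
    c = list(c)
    syndrome = 0
    for position, bit in enumerate(c, start=1):
        if bit:
            syndrome ^= position   # XOR of the positions of all set bits
    if syndrome:                   # nonzero: names the flipped position (single-error assumption)
        c[syndrome - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], syndrome


data = [1, 0, 1, 1]
codeword = hamming74_encode(data)   # [0, 1, 1, 0, 0, 1, 1]
damaged = codeword.copy()
damaged[4] ^= 1                     # flip position 5 (d2) in transit
decoded, error_at = hamming74_decode(damaged)
print(decoded, error_at)            # [1, 0, 1, 1] 5
```

Hamming(7,4) repairs one error per 7-bit block; two errors in the same block are miscorrected, so practical links use stronger codes and still rely on integrity checks to catch what slips through.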

Kant entered the philosophical scene under the long shadow of Christian Wolff, whose systematic rationalism promised to derive the whole edifice of metaphysics from concepts alone. What troubled the young Königsberg lecturer was not Wolff’s rigor but its unexamined presupposition that mere logical analysis could yield substantive knowledge of soul, world, and God. Wolff inherited from Leibniz the conviction that a sufficiently sharp intellect might unpack the predicates already “virtually contained” in a subject and thereby reach metaphysical truth without recourse to intuition. Kant gradually came to regard this confidence as dogmatic: it ignored the way cognition is conditioned by sensibility, and it blurred the distinction between logical containment and real determination.

The first decisive breach concerned ontology. Wolff treats existence as a predicate implicitly carried by the concept of an ens perfectissimum; hence the ontological proof proceeds by analytic unpacking. Kant’s objection that being is “not a real predicate” denies that logical inclusion suffices for actuality. The point is not a mere semantic quibble but a frontal assault on the Wolffian bid to secure metaphysics through conceptual deduction. If existence adds nothing to a concept’s content, then the transition from possibility to actuality cannot be managed by analysis alone, and Wolff’s entire architectonic loses its transcendent reach.

A second fault line opens in rational psychology. Wolff argues from the “I think” to the substantive simplicity and immortality of the soul, treating the unity of consciousness as evidence of an underlying simple substance. Kant replies that the unity of apperception is a formal condition of thought, not an empirical discovery about a thing-in-itself. The Wolffian inference, an instance of what Kant later labels a “paralogism,” illicitly converts the logical subject of predication into a self-subsisting entity.
In so doing it confuses the necessary unity required for judgments with metaphysical simplicity, once again overstepping the bounds that separate conditions of experience from knowledge of noumena.

Finally, Wolff’s cosmology and theology rely on the principle of sufficient reason to stride beyond experience, concluding for example that the world must have a first cause or that monads must unfold in pre-established harmony. Kant’s Critique shows that when such principles are extended beyond possible experience they engender antinomies (equally cogent arguments for mutually exclusive claims), revealing reason’s dialectical illusion. The “beef” thus crystallizes: Wolff’s rationalism presumes that reason can legislate unconditionally, whereas Kant insists that reason must critique its own limits. Metaphysics remains possible, but only as a discipline that secures the conditions of experience and the moral law; it cannot, as Wolff hoped, map the supersensible through analysis alone.

Heidegger reached back to the Kant–Wolff dispute to clear terrain for fundamental ontology. On his reading, the Wolffian school metaphysics, prolonged in Neo-Kantian interpretations of the Critique as a theory of knowledge, had frozen Kant’s transcendental project into a logic of objective representation, stripping it of the temporal dynamism Heidegger wished to recover. By exposing Kant’s struggle against Wolff as an unfinished “destruction” of scholastic metaphysics, Heidegger found a point of departure: the transcendental imagination, obscured in Wolffian and Neo-Kantian glosses, harbors the finite, time-bound synthesis that first makes phenomena intelligible. Kant and the Problem of Metaphysics (1929) turns that neglected faculty into the locus where Being shows itself as temporality, thereby transforming the Critique from an epistemology into a prolegomenon to existential analytics.

The reading functions as both retrieval and leap. Retrieval, because it excavates what Kant implicitly opposed in Wolff: the reduction of thinking to eternally valid judgments detached from finite existence.
Leap, because it extends Kant’s finite synthesis into the ontological horizon of Dasein’s ecstatic temporality. Where Wolff sought apodictic security in conceptual deduction, Heidegger locates primordial disclosure in finite transcendence. The result is a reversal of priorities: the conditions of possibility for knowledge no longer reside in ahistorical categories but in the temporal opening through which entities first appear. Thus the breach Kant opened against Wolff becomes the launchpad for a more radical critique, one that displaces metaphysics toward the question of Being itself.

Heidegger’s retrieval of the transcendental imagination breaks the Wolffian frame by showing that synthesis is neither a timeless logical procedure nor a mere psychological act but the finite event in which Being becomes intelligible as ecstatic temporal unfolding. The imagination’s schematism fuses intuition and concept through a non-representational operation that opens the horizonal unity of past, present, and future; this temporal unity, rather than any inventory of categories, grounds the possibility of understanding. Consequently, the Kantian question of how knowledge is possible shifts toward the ontological question of how entities as such can appear, replacing Wolff’s deductive architecture with a hermeneutics of finite disclosure.

From this vantage, the long-standing aspirations of metaphysics (to secure unchanging foundations, to classify the real through concept-analysis, to guarantee certainty by logical entailment) disintegrate into historically situated projects inside the broader play of Being’s self-concealment and self-unveiling. The critique of reason thus becomes a critique of presence: any claim to ultimate grounds must reckon with the temporal finitude that both enables and circumscribes intelligibility.
Heidegger’s analytic of Dasein, launched from this reformulated Kantian base, pursues the primordial structures of care, mood, and understanding not as psychological traits but as the very ways in which Being grants and withdraws itself in time, thereby recasting philosophy’s task from erecting systems to clarifying the thrown, ecstatic conditions of every possible system.

Nyquist’s limit prescribes an a priori cadence at which discrete distinctions must be sampled for continuous form to re-emerge without aliasing; Kant’s categories likewise prescribe an a priori syntax through which appearances can be synthesized without collapsing into indeterminate flux. Cryptography mirrors Kantian practical reason: a self-imposed law that secures the integrity of volition (here, the cipher) against empirical noise while remaining indifferent to contingent incentives. The telegraph materialized this double structure. Electrical pulses traversed distance only by submitting to Nyquist-type spacing, while coded sequences, from Morse to later cipher blocks, enacted a practical autonomy that refused unauthorized interpretation. Thus, long before information theory, electrical communication disclosed that the possibility of transmission rests on an interplay between transcendental form and practical legislation.

Wolffian rationalism had promised to derive metaphysical truth from conceptual deduction alone, treating existence as a predicate analytically contained within notions of perfection. Kant broke that confidence by demonstrating that such deductions outrun the bounds of possible experience; the unity of apperception and the categories structure cognition but do not deliver knowledge of things-in-themselves. In signal terms, Wolff mistook the logical content of a pattern for its actual transmission, ignoring the bandwidth that conditions legibility. Kant inserted the equivalent of Nyquist’s threshold into metaphysics: without transcendental conditions, conceptual traffic aliases into dogmatic illusion.

Heidegger took Kant’s breach with Wolff as a launching pad for an ontological inversion. By foregrounding the transcendental imagination (schematism as time-bound synthesis), Heidegger relocated the condition of intelligibility from static categories to the ecstatic opening of a temporal horizon.
The shift replaces Wolff’s timeless lattice of deductions with a finite circuitry of disclosure, where meaning flickers only within the spacing of past, present, and future. In grammatological terms, spacing and deferral cease to be mere complications of reason; they become the originary writing that lets Being appear at all. Telegraphy’s empirical tele-pathy, with signals traveling through intervals and delays, thus anticipates Heidegger’s insight: intelligibility is always mediated by a spacing that evades full presence.

Cryptographic practice consummates this trajectory. A cipher stands as an autonomous maxim willed under the constraint of Nyquistian form; it survives only by respecting temporal spacing, yet it withholds semantic presence, deferring it until a key re-opens the signal. What appears as secure communication is therefore the conjunction of transcendental limit, practical legislation, and ontological finitude. Nyquist guards the structural possibility of distinction, Kant articulates the normative authority of law within that structure, Heidegger exposes the temporal clearing in which both can operate, and cryptography operationalizes the ensemble, turning finite spacing into a disciplined concealment that nonetheless insists on exact recoverability. The modern information landscape (bandwidth allocation, encryption standards, clock synchronization) unfolds as a technical palimpsest of these philosophical strata, each layer writing and overwriting the conditions under which sense traverses distance without forfeiting either form or freedom.
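That telegraphic marks carry meaning only through disciplined spacing is visible in Morse code itself, where the gaps belong to the code as much as the dots and dashes do. The Python sketch below assumes the common International Morse timing convention (dot = 1 unit, dash = 3, gap inside a letter = 1, between letters = 3, between words = 7) and uses a deliberately tiny letter table; `MORSE`, `to_keying`, and `from_keying` are illustrative names.

```python
from itertools import groupby

# Small subset of International Morse Code, for illustration only.
MORSE = {"E": ".", "T": "-", "A": ".-", "N": "-.", "S": "...", "O": "---"}


def to_keying(text):
    """Render text as on/off keying, one character per time unit ('1' = on)."""
    words = []
    for word in text.upper().split():
        letters = []
        for letter in word:
            marks = ["1" if sym == "." else "111" for sym in MORSE[letter]]
            letters.append("0".join(marks))   # 1-unit gap inside a letter
        words.append("000".join(letters))     # 3-unit gap between letters
    return "0000000".join(words)              # 7-unit gap between words


def from_keying(signal):
    """Decode keying by run lengths: marks give dots/dashes, gaps give boundaries."""
    reverse = {code: letter for letter, code in MORSE.items()}
    words, letters, symbols = [], [], ""
    for state, run in groupby(signal):
        length = len(list(run))
        if state == "1":
            symbols += "." if length == 1 else "-"
        elif length >= 3:                      # letter boundary (3) or word boundary (7)
            letters.append(reverse[symbols])
            symbols = ""
            if length >= 7:
                words.append("".join(letters))
                letters = []
    letters.append(reverse[symbols])           # flush the final letter and word
    words.append("".join(letters))
    return " ".join(words)


keyed = to_keying("sos")
print(keyed)               # 101010001110111011100010101
print(from_keying(keyed))  # SOS
```

Decoding works only because the run lengths survive transmission. If the channel smeared the 3-unit gap between “E” and “T” into a 1-unit gap, the receiver would read “A” instead: a small-scale case of the blurring the text attributes to intersymbol interference.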

4:17am